Accelerated networking is something I’ve previously suggested in my 7 ways to optimize your Azure VM performance post.

In short, this bypasses the virtual switch on the host, allowing the NIC to forward network traffic directly to the virtual machine. The result is lower latency over the Virtual Network, but it’s important to note that currently this is really only beneficial for Virtual Machines communicating over the same Virtual Network. If using VNET peering or Virtual Network Gateways the improvements are negligible.

I decided to put this to the test in my lab and see what kind of a difference it actually makes.

For my environment I deployed two DS2v2 Virtual Machines from the Azure marketplace running Windows Server 2016 as the Operating System. These were deployed in the North Europe region and I used a Premium SSD 128GB managed OS disk. I didn’t deploy using availability sets, availability zones or proximity placement groups (more on this later).

Most importantly, for my baseline test I deployed these Virtual Machines without accelerated networking enabled. I also didn’t make any configuration changes to the OS; I just used the base image configuration.

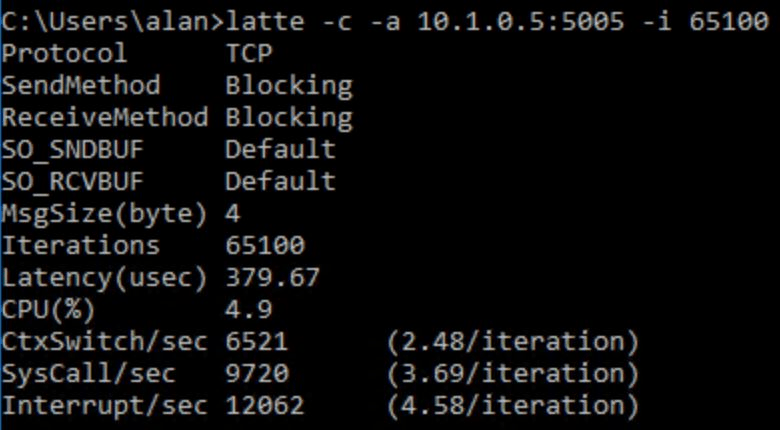

In order to test the latency between my two Virtual Machines I used latte.exe which is the tool recommended by Microsoft for latency testing on Windows. This is a simple enough setup where one host acts as a sender and the other as the receiver and the latency is measured between the two over a number of iterations. This tool measures TCP or UDP traffic as opposed to ICMP (ping) so it’s a more realistic latency test.
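For reference, a latte run of the kind described above looks roughly like the following. The syntax follows Microsoft's published latency-testing guidance as I understand it; the IP address 10.0.0.4, port 5005 and iteration count are placeholder values, not the ones used in this test.

```powershell
# On the receiver VM: listen on the receiver's own private IP and a free port.
# 10.0.0.4:5005 and the iteration count are placeholders.
.\latte.exe -a 10.0.0.4:5005 -i 65100

# On the sender VM: -c marks this side as the sender (client).
# The address is still the receiver's IP and port.
.\latte.exe -c -a 10.0.0.4:5005 -i 65100
```

Start the receiver first, then the sender. The receiver will typically also need an inbound Windows Defender Firewall rule that allows latte.exe, otherwise the sender cannot connect.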

I ran the test five times and the lowest latency I recorded with my default setup was 380 usec (microseconds), that is, 380 millionths of a second.

Next, I wanted to see what kind of latency I would get with accelerated networking enabled on both of my Virtual Machines. This feature can be enabled with a few lines of PowerShell, but you first need to deallocate your Virtual Machines.

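```powershell
# Fetch the NIC attached to the (deallocated) Virtual Machine
$nic = Get-AzNetworkInterface -ResourceGroupName "myResourceGroup" -Name "myNic"

# Turn on accelerated networking for this NIC
$nic.EnableAcceleratedNetworking = $true

# Write the change back to Azure
$nic | Set-AzNetworkInterface
```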

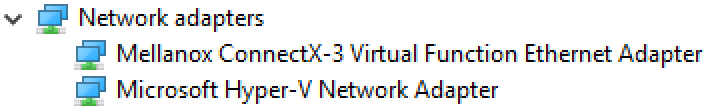

Once accelerated networking is enabled you simply start your Virtual Machines again. You will now have a new network adapter installed. Your virtual NIC will also report accelerated networking as enabled now.
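Putting the whole cycle together, the deallocate, enable and start steps might be scripted along these lines. This is a minimal sketch that assumes the Az PowerShell module is installed and you are already signed in; the resource group, VM and NIC names are hypothetical placeholders.

```powershell
# Hypothetical names; replace with your own resource group, VM and NIC.
$rg      = "myResourceGroup"
$vmName  = "myVm"
$nicName = "myNic"

# Stop-AzVM deallocates the VM by default (unless -StayProvisioned is used).
Stop-AzVM -ResourceGroupName $rg -Name $vmName -Force

# Enable accelerated networking on the NIC, as shown earlier.
$nic = Get-AzNetworkInterface -ResourceGroupName $rg -Name $nicName
$nic.EnableAcceleratedNetworking = $true
$nic | Set-AzNetworkInterface

# Start the VM again and read the setting back from the NIC.
Start-AzVM -ResourceGroupName $rg -Name $vmName
(Get-AzNetworkInterface -ResourceGroupName $rg -Name $nicName).EnableAcceleratedNetworking
```

Repeat this for each Virtual Machine. Inside the guest, running `Get-NetAdapter` should then list the additional network adapter alongside the original one.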

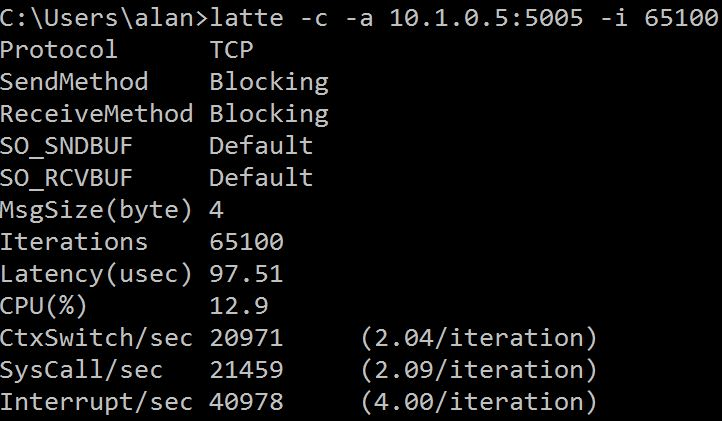

I then ran exactly the same latency tests as before using latte.exe. This time my lowest latency from five tests was 97.5 usec, which is approximately 4 times lower than when accelerated networking was disabled.

I also noticed that I was getting a much more consistent result with accelerated networking enabled. All of my results were very close to the 100 usec mark whereas with accelerated networking disabled I was seeing results ranging from 380 to over 600 usec.
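As a quick check on the "approximately 4 times" figure, the two best-case results can be compared directly. The variable names here are just for illustration; the numbers are the ones reported above.

```powershell
$baselineUsec    = 380    # lowest latency without accelerated networking
$acceleratedUsec = 97.5   # lowest latency with accelerated networking

# Speed-up factor: how many times lower the accelerated latency is.
$speedup = [math]::Round($baselineUsec / $acceleratedUsec, 2)

# Relative reduction in latency, as a percentage of the baseline.
$reductionPct = [math]::Round(($baselineUsec - $acceleratedUsec) / $baselineUsec * 100, 1)

"Speed-up: ${speedup}x, latency reduction: ${reductionPct}%"
```

That works out to a speed-up of roughly 3.9x, or about a 74% reduction in best-case latency.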

That is a clear improvement, and this feature does not cost you anything. If deploying new Virtual Machines via the Azure portal, accelerated networking seems to be enabled by default nowadays, but for older deployments you may want to consider enabling it as outlined earlier in this post.

Note: Accelerated networking is limited to certain Operating Systems and Virtual Machine sizes. Links below for Windows and Linux.

Proximity Placement Groups

Following my own advice from my earlier referenced blog post, I decided to try moving my two Virtual Machines into a proximity placement group. This can be created and applied either during or post Virtual Machine deployment. Post deployment will require deallocating the Virtual Machines, adding them to the same proximity placement group and starting the Virtual Machines again.
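For the post-deployment route, the steps could be scripted roughly as follows. This is a sketch under assumptions, not the exact commands I ran: the resource group, VM names and group name are hypothetical, and the region is set to North Europe to match this lab.

```powershell
# Hypothetical names; adjust to your environment.
$rg      = "myResourceGroup"
$vmNames = @("myVm1", "myVm2")

# Create the proximity placement group in the same region as the VMs.
$ppg = New-AzProximityPlacementGroup -ResourceGroupName $rg -Name "myPpg" `
    -Location "northeurope" -ProximityPlacementGroupType Standard

# Deallocate every VM first so they can all be placed together.
foreach ($name in $vmNames) {
    Stop-AzVM -ResourceGroupName $rg -Name $name -Force
}

# Attach each VM to the group, then start it again.
foreach ($name in $vmNames) {
    $vm = Get-AzVM -ResourceGroupName $rg -Name $name
    Update-AzVM -VM $vm -ResourceGroupName $rg -ProximityPlacementGroupId $ppg.Id
    Start-AzVM -ResourceGroupName $rg -Name $name
}
```

Deallocating all of the Virtual Machines before starting any of them gives Azure the chance to place them together when they come back up.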

After performing this step I didn’t find any noticeable improvement in my latency tests. However, this most likely just means my two Virtual Machines were already located close together physically within the same Azure datacenter, possibly even on the same host cluster.

Best practice would be to use proximity placement groups for multi-tiered applications. It may not always make a noticeable difference immediately, but if any of your Virtual Machines ever need to move to a different host, then without proximity placement groups there is a chance they could be moved further apart physically, increasing network latency to some extent. A change of host may occur for a number of reasons, such as Virtual Machine redeployment, auto-recovery processes, host failure, or planned or unplanned maintenance.

Using proximity placement groups gives you some level of control over this, so it’s worth configuring, especially as it also comes at no extra cost.

Everyone wants to talk about how to enable accelerated networking, its benefits, and, in your case, where it does not really help.

I submit everyone is focusing on the wrong question. The real question is “why not” enable accelerated networking? There is no cost to enabling this feature, and as everyone has shown (over and over), it yields benefits in the right scenarios.

So, is there any reason at all not to just enable this feature for any VM that supports it? Any downsides whatsoever?

I would love to see somebody write a researched article on this question, instead of treading the hard-packed ground.

Your performance metrics match what we are seeing, thanks for posting this.
