Welcome to part 4 of my well-tempered Azure tenant series for MSPs. We have already begun our journey of managing our customers’ Azure tenants with Azure Backup. Let’s switch focus now to governance and discuss how we can use Azure Policy to put some guardrails in place for our customers. This is not only good practice but also helps ensure that we have consistent governance across our customer base rather than taking an ad hoc approach.

Part 4: Azure Policy

This post won’t cover what Azure Policy is; I will assume that you already know what it is and what it is used for. Instead, I want to focus on how we can manage Azure policies across multiple customers at scale.

I mentioned in part 2 how Azure Blueprints can be used to deploy RBAC roles and policies for new customers, and this is definitely how I recommend assigning your baseline policies right from the start. Policies must be scoped to a management group, a subscription or a resource group, so there is no way to deploy and assign policies across multiple tenants all at once using the Azure portal.

If this is required then you could use a script such as this example to iterate through your delegated customer subscriptions and create policy assignments or deployments.
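As a rough sketch of what such a script might do (this is not the linked example), the Python below builds one policy assignment request per delegated subscription. It only constructs the URL and JSON body for the Azure Resource Manager REST API; sending each request with an authenticated client is left out. The subscription IDs, resource ID placeholders, assignment name, `logAnalytics` parameter name, API version and helper name are all assumptions for illustration.

```python
import json

ARM = "https://management.azure.com"
API_VERSION = "2022-06-01"  # assumed API version; check the current REST reference


def build_assignment_request(subscription_id, name, policy_definition_id,
                             parameters, identity_id, location):
    """Return (url, body) for a PUT that assigns a policy at subscription scope."""
    scope = f"/subscriptions/{subscription_id}"
    url = (f"{ARM}{scope}/providers/Microsoft.Authorization/"
           f"policyAssignments/{name}?api-version={API_VERSION}")
    body = {
        # A location is needed when the assignment carries a managed identity.
        "location": location,
        "identity": {
            "type": "UserAssigned",
            "userAssignedIdentities": {identity_id: {}},
        },
        "properties": {
            "displayName": name,
            "policyDefinitionId": policy_definition_id,
            # Each parameter value is wrapped as {"value": ...}.
            "parameters": {k: {"value": v} for k, v in parameters.items()},
        },
    }
    return url, body


# Hypothetical delegated customers; every ID here is a placeholder.
CUSTOMERS = [
    {"subscription_id": "00000000-0000-0000-0000-000000000001",
     "workspace_id": "<customer-1-workspace-resource-id>",
     "identity_id": "<customer-1-identity-resource-id>"},
    {"subscription_id": "00000000-0000-0000-0000-000000000002",
     "workspace_id": "<customer-2-workspace-resource-id>",
     "identity_id": "<customer-2-identity-resource-id>"},
]

# One request per customer, each pointing at that customer's own workspace.
REQUESTS = [
    build_assignment_request(
        c["subscription_id"],
        "backup-diagnostics",                  # hypothetical assignment name
        "<policy-definition-resource-id>",     # placeholder for the policy ID
        {"logAnalytics": c["workspace_id"]},   # parameter name is an assumption
        c["identity_id"],
        "westeurope",
    )
    for c in CUSTOMERS
]

for url, body in REQUESTS:
    print("PUT", url)
    print(json.dumps(body, indent=2))
```

Keeping the request construction separate from the HTTP call makes it easy to review exactly what would be sent to each customer before anything is applied.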

At a larger scale, I recommend designing your policies using infrastructure-as-code and even using DevOps to push out your policy changes through Azure Pipelines or GitHub Actions. This will provide you with better source control and a more centralised approach to defining, testing and deploying your policies.

This will not be the focus of this post, however, as it would likely need a blog series of its own to cover everything!

Policy example using the Azure portal

Let’s run through an example of assigning a policy using the Azure portal to a single subscription belonging to one of our delegated customers.

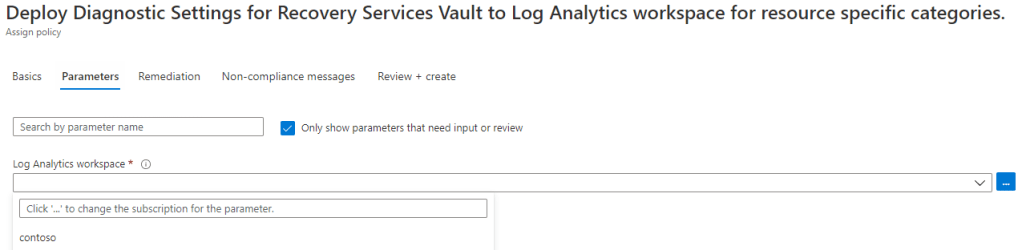

In my last post I mentioned a useful policy for Azure Backup. This policy is titled “Deploy Diagnostic Settings for Recovery Services Vault to Log Analytics workspace for resource specific categories”, and its purpose is to configure the Recovery Services Vault diagnostic settings required for Azure Backup reports. As mentioned in the previous post, I recommend that each customer has their own Log Analytics workspace deployed in their own Azure tenant. This can be used for centralised monitoring of multiple resources, so let’s assume it has already been deployed for the example below.

Once we select the policy that we want to assign, you will see that we can choose any of our Azure Lighthouse delegated customer subscriptions as the scope for the policy.

In the parameters section for this policy we will need to choose the Log Analytics workspace for the diagnostics logs to be sent to, so make sure to choose the workspace on the customer tenant.

This particular policy has a DeployIfNotExists effect available, which means that the configuration will be deployed to any newly created Recovery Services Vaults in the selected subscription scope. If you have Recovery Services Vaults already deployed, then I recommend creating a remediation task. This will attempt to add the configuration settings to existing resources in the selected subscription scope.
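For anyone scripting this step rather than using the portal, here is a minimal sketch of the request that creates a remediation task for an existing assignment. It only builds the URL and body for the Policy Insights REST API and does not send anything. The subscription ID, names, API version and helper name are assumptions for illustration.

```python
ARM = "https://management.azure.com"
REMEDIATION_API_VERSION = "2021-10-01"  # assumed API version; verify before use


def build_remediation_request(subscription_id, remediation_name, assignment_name):
    """Return (url, body) for a PUT that creates a remediation task."""
    scope = f"/subscriptions/{subscription_id}"
    assignment_id = (f"{scope}/providers/Microsoft.Authorization/"
                     f"policyAssignments/{assignment_name}")
    url = (f"{ARM}{scope}/providers/Microsoft.PolicyInsights/"
           f"remediations/{remediation_name}"
           f"?api-version={REMEDIATION_API_VERSION}")
    body = {
        "properties": {
            "policyAssignmentId": assignment_id,
            # Only remediate resources already evaluated as non-compliant.
            "resourceDiscoveryMode": "ExistingNonCompliant",
        }
    }
    return url, body


# Hypothetical values for one delegated customer subscription.
REMEDIATION_URL, REMEDIATION_BODY = build_remediation_request(
    "00000000-0000-0000-0000-000000000001",
    "remediate-backup-diagnostics",
    "backup-diagnostics",
)
print("PUT", REMEDIATION_URL)
print(REMEDIATION_BODY)
```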

Remediation tasks

In order for remediation tasks to work, you will need to either specify an existing user-assigned managed identity on the customer tenant or create a new system-assigned managed identity for this policy. If you intend to use a system-assigned managed identity, then you will have to deploy your policy with a delegatedManagedIdentityResourceId property in order for the delegated permissions to be correctly assigned. This is currently only possible by deploying with an ARM template through the API and is therefore not currently supported by the Azure portal. More on this here.

My preference, however, is to use a user-assigned managed identity instead. This allows you to re-use a single managed identity resource for all of your Azure Policy management tasks.

If you refer to part 2 of this series, you will note that it can be a good idea to deploy a user-assigned managed identity at the time of onboarding your customers to Azure Lighthouse. This is useful for Azure Blueprint deployments but also for these remediation tasks, as the identity will already have the Owner role on the subscription and therefore enough permissions to execute any of them.

In this particular example the managed identity requires the Monitoring Contributor and Log Analytics Contributor permissions, and I have selected a user-assigned managed identity on the customer tenant.
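To give an idea of what the system-assigned route involves, the sketch below generates the role assignment resource that would sit in such an ARM template, with the delegatedManagedIdentityResourceId property pointing at the policy assignment. It is loosely modelled on the pattern used in Microsoft's Azure Lighthouse samples as I understand it; the API versions, the template expressions and the helper name are assumptions and should be checked against the official sample before use.

```python
import json


def delegated_role_assignment(assignment_name, role_definition_guid,
                              role_assignment_guid):
    """Build an ARM template resource granting a role to the policy
    assignment's system-assigned identity in a delegated subscription."""
    assignment_ref = ("/providers/Microsoft.Authorization/"
                      f"policyAssignments/{assignment_name}")
    return {
        "type": "Microsoft.Authorization/roleAssignments",
        "apiVersion": "2019-04-01-preview",  # assumed; see lead-in
        "name": role_assignment_guid,
        # The policy assignment must exist before its identity is referenced.
        "dependsOn": [assignment_name],
        "properties": {
            "roleDefinitionId": (
                "[concat(subscription().id, "
                "'/providers/Microsoft.Authorization/roleDefinitions/"
                f"{role_definition_guid}')]"
            ),
            "principalType": "ServicePrincipal",
            # Tells ARM which delegated resource owns the identity.
            "delegatedManagedIdentityResourceId": (
                f"[concat(subscription().id, '{assignment_ref}')]"
            ),
            "principalId": (
                f"[toLower(reference('{assignment_ref}', "
                "'2018-05-01', 'Full').identity.principalId)]"
            ),
        },
    }


# Placeholder GUIDs; substitute the role definition your policy needs.
RESOURCE = delegated_role_assignment(
    "backup-diagnostics",
    "<role-definition-guid>",
    "<new-role-assignment-guid>",
)
print(json.dumps(RESOURCE, indent=2))
```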

Policy monitoring

This one is pretty straightforward: you can use the Azure portal to track all of your remediation tasks across all customers. This is another example of why naming your Azure subscriptions after your customers is a good idea!

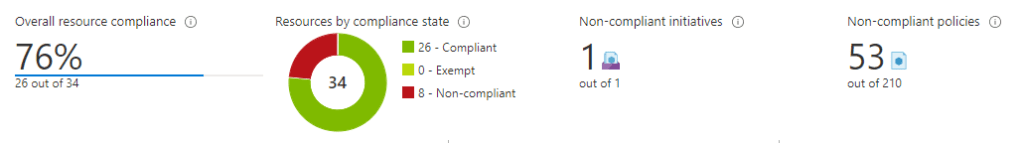

In terms of checking compliance, you can filter by customer or even review your overall compliance score across all customers in a single view.

We now have an efficient way of managing our policies across customers at scale.

Which policies?

I was going to put together a list of policies that I like to suggest for good governance, but instead I will refer you to a great recent blog post by Vukašin Terzić, which has some excellent suggestions. You will find his post here.

What’s next?

In the next post, I will cover Azure Monitor and how this can be used for monitoring and alerting across your customer tenants.
